Oversamplers¶

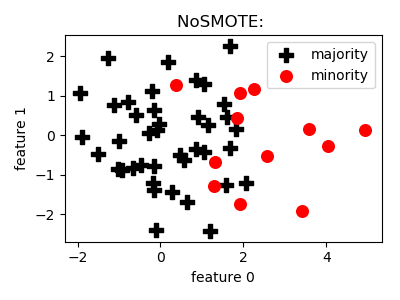

NoSMOTE¶

API¶

-

class

smote_variants.NoSMOTE(random_state=None)[source]¶ -

__init__(random_state=None)[source]¶ Constructor of the NoSMOTE object.

Parameters: random_state (int/np.random.RandomState/None) – dummy parameter for the compatibility of interfaces

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.NoSMOTE()

>>> X_samp, y_samp= oversampler.sample(X, y)

The goal of this class is to provide a way to pass data through any model selection/evaluation pipeline with no oversampling carried out. It can be used to get baseline estimates of performance.
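The pass-through behaviour described above can be illustrated with a minimal sketch. The class name `PassThroughSampler` is hypothetical and is not part of smote_variants; it only mimics the `sample`/`get_params` interface documented here, using plain Python lists instead of NumPy arrays.

```python
class PassThroughSampler:
    """Hypothetical stand-in that mimics a no-op oversampler interface."""

    def __init__(self, random_state=None):
        # Accepted only so the constructor matches other samplers.
        self.random_state = random_state

    def sample(self, X, y):
        # Return copies of the rows and labels; no synthetic samples are added.
        return [list(row) for row in X], list(y)

    def get_params(self, deep=False):
        return {"random_state": self.random_state}


X = [[0.0, 1.0], [1.0, 0.0], [2.0, 2.0]]
y = [0, 0, 1]
X_samp, y_samp = PassThroughSampler().sample(X, y)
```

Because the output equals the input, any score obtained downstream reflects the classifier on the original class distribution, which is what makes it usable as a baseline.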

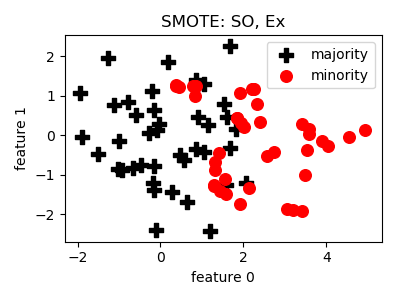

SMOTE¶

API¶

-

class

smote_variants.SMOTE(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ Constructor of the SMOTE object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – control parameter of the nearest neighbor technique

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn
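As a rough illustration of how `proportion` and `n_neighbors` interact, the sketch below computes the number of samples to generate and then interpolates between minority neighbours in the manner of the original SMOTE paper. The helper names `n_to_sample` and `smote_sketch`, the truncating rounding, and the use of plain lists are assumptions made for this example; the library's own implementation may differ.

```python
import math
import random


def n_to_sample(n_maj, n_min, proportion=1.0):
    # Assumed rounding: truncate towards zero, never negative.
    return max(0, int(proportion * (n_maj - n_min)))


def smote_sketch(X_min, n_new, n_neighbors=5, seed=None):
    """Generate n_new synthetic points by interpolating between minority neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(X_min)
        # Nearest minority neighbours of the base point, excluding the point itself.
        others = [x for x in X_min if x is not base]
        others.sort(key=lambda x: math.dist(base, x))
        neighbour = rng.choice(others[:n_neighbors])
        gap = rng.random()  # position along the segment base -> neighbour
        synthetic.append([b + gap * (n - b) for b, n in zip(base, neighbour)])
    return synthetic


X_min = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
n_new = n_to_sample(n_maj=10, n_min=len(X_min), proportion=0.5)
new_points = smote_sketch(X_min, n_new, n_neighbors=2, seed=0)
```

With 10 majority and 3 minority samples, `proportion=0.5` asks for half of the difference of 7, which this sketch truncates to 3 new points; `proportion=1.0` would ask for all 7.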

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.SMOTE()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@article{smote, author={Chawla, N. V. and Bowyer, K. W. and Hall, L. O. and Kegelmeyer, W. P.}, title={{SMOTE}: synthetic minority over-sampling technique}, journal={Journal of Artificial Intelligence Research}, volume={16}, year={2002}, pages={321--357} }

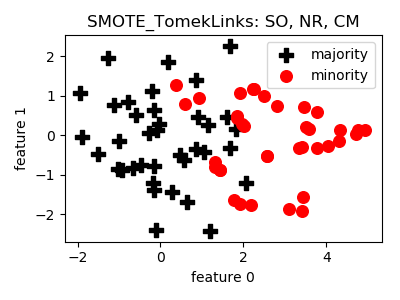

SMOTE_TomekLinks¶

API¶

-

class

smote_variants.SMOTE_TomekLinks(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ Constructor of the SMOTE_TomekLinks object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – control parameter of the nearest neighbor technique

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.SMOTE_TomekLinks()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@article{smote_tomeklinks_enn, author = {Batista, Gustavo E. A. P. A. and Prati, Ronaldo C. and Monard, Maria Carolina}, title = {A Study of the Behavior of Several Methods for Balancing Machine Learning Training Data}, journal = {SIGKDD Explor. Newsl.}, issue_date = {June 2004}, volume = {6}, number = {1}, month = jun, year = {2004}, issn = {1931-0145}, pages = {20--29}, numpages = {10}, url = {http://doi.acm.org/10.1145/1007730.1007735}, doi = {10.1145/1007730.1007735}, acmid = {1007735}, publisher = {ACM}, address = {New York, NY, USA}, }
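For readers unfamiliar with the cleaning step this class is named after, a Tomek link is a pair of samples from different classes that are each other's nearest neighbour. The sketch below only detects such pairs on toy data; the function name `tomek_links` is hypothetical, and how the library removes the linked samples after SMOTE is not shown here.

```python
import math


def tomek_links(X, y):
    """Return index pairs (i, j), i < j, that are mutual nearest neighbours with different labels."""
    def nearest(i):
        return min((j for j in range(len(X)) if j != i),
                   key=lambda j: math.dist(X[i], X[j]))

    nn = [nearest(i) for i in range(len(X))]
    return [(i, j) for i, j in enumerate(nn)
            if i < j and nn[j] == i and y[i] != y[j]]


X = [[0.0], [0.1], [1.0], [1.05], [3.0]]
y = [0, 0, 0, 1, 1]
links = tomek_links(X, y)
```

Here samples 0 and 1 are mutual nearest neighbours but share a label, so only the pair (2, 3) qualifies.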

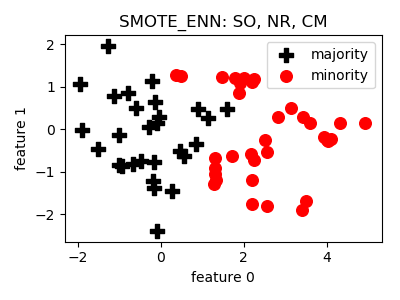

SMOTE_ENN¶

API¶

-

class

smote_variants.SMOTE_ENN(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ Constructor of the SMOTE_ENN object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – control parameter of the nearest neighbor technique

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.SMOTE_ENN()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@article{smote_tomeklinks_enn, author = {Batista, Gustavo E. A. P. A. and Prati, Ronaldo C. and Monard, Maria Carolina}, title = {A Study of the Behavior of Several Methods for Balancing Machine Learning Training Data}, journal = {SIGKDD Explor. Newsl.}, issue_date = {June 2004}, volume = {6}, number = {1}, month = jun, year = {2004}, issn = {1931-0145}, pages = {20--29}, numpages = {10}, url = {http://doi.acm.org/10.1145/1007730.1007735}, doi = {10.1145/1007730.1007735}, acmid = {1007735}, publisher = {ACM}, address = {New York, NY, USA}, }

- Notes:

- Can remove too many minority samples.
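The note above can be reproduced with a small sketch of Edited Nearest Neighbours filtering, which drops every sample whose label disagrees with the majority vote of its nearest neighbours. The function `enn_filter` and the choice `k=3` are assumptions made for illustration and do not reflect the library's internals.

```python
import math
from collections import Counter


def enn_filter(X, y, k=3):
    """Return indices of samples whose label matches the majority vote of their k nearest neighbours."""
    keep = []
    for i in range(len(X)):
        order = sorted((j for j in range(len(X)) if j != i),
                       key=lambda j: math.dist(X[i], X[j]))
        votes = Counter(y[j] for j in order[:k])
        if votes.most_common(1)[0][0] == y[i]:
            keep.append(i)
    return keep


# One isolated minority sample (label 1) surrounded by majority samples.
X = [[0.0], [0.2], [0.4], [0.5], [0.6], [0.8]]
y = [0, 0, 0, 1, 0, 0]
kept = enn_filter(X, y, k=3)
```

In this toy data the only minority sample is outvoted by its neighbours and removed, leaving no minority samples at all.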

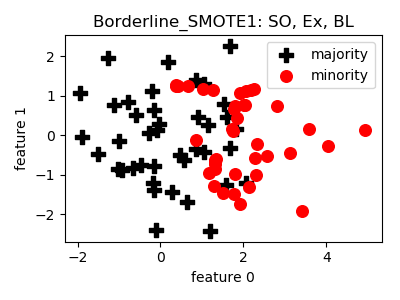

Borderline_SMOTE1¶

API¶

-

class

smote_variants.Borderline_SMOTE1(proportion=1.0, n_neighbors=5, k_neighbors=5, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, k_neighbors=5, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – control parameter of the nearest neighbor technique for determining the borderline

- k_neighbors (int) – control parameter of the nearest neighbor technique for sampling

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn
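To make the role of `n_neighbors` concrete: in the Borderline-SMOTE paper a minority sample is considered to be on the borderline ("in danger") when at least half, but not all, of its nearest neighbours belong to the majority class. The sketch below applies that rule to toy data; the function name `danger_indices` is hypothetical and the library's own bookkeeping may differ.

```python
import math


def danger_indices(X, y, min_label, n_neighbors=5):
    """Indices of minority samples with at least half, but not all, majority neighbours."""
    danger = []
    for i, label in enumerate(y):
        if label != min_label:
            continue
        order = sorted((j for j in range(len(X)) if j != i),
                       key=lambda j: math.dist(X[i], X[j]))
        n_maj = sum(1 for j in order[:n_neighbors] if y[j] != min_label)
        if n_neighbors / 2 <= n_maj < n_neighbors:
            danger.append(i)
    return danger


X = [[0.0], [0.1], [0.2], [0.7], [1.0], [1.5], [1.6], [1.55],
     [2.0], [2.1], [2.2], [2.3]]
y = [1, 1, 1, 1, 0, 0, 0, 1, 0, 0, 0, 0]
danger = danger_indices(X, y, min_label=1, n_neighbors=2)
```

Sample 3 has one majority and one minority neighbour, so it is on the borderline; sample 7 has only majority neighbours and is treated as noise rather than as a seed for sampling.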

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.Borderline_SMOTE1()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@InProceedings{borderlineSMOTE, author="Han, Hui and Wang, Wen-Yuan and Mao, Bing-Huan", editor="Huang, De-Shuang and Zhang, Xiao-Ping and Huang, Guang-Bin", title="Borderline-SMOTE: A New Over-Sampling Method in Imbalanced Data Sets Learning", booktitle="Advances in Intelligent Computing", year="2005", publisher="Springer Berlin Heidelberg", address="Berlin, Heidelberg", pages="878--887", isbn="978-3-540-31902-3" }

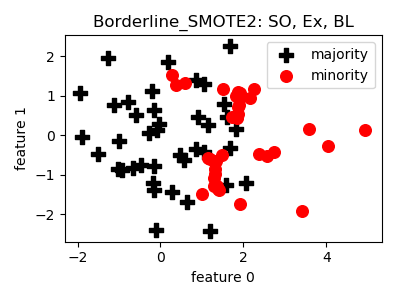

Borderline_SMOTE2¶

API¶

-

class

smote_variants.Borderline_SMOTE2(proportion=1.0, n_neighbors=5, k_neighbors=5, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, k_neighbors=5, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – control parameter of the nearest neighbor technique for determining the borderline

- k_neighbors (int) – control parameter of the nearest neighbor technique for sampling

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.Borderline_SMOTE2()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@InProceedings{borderlineSMOTE, author="Han, Hui and Wang, Wen-Yuan and Mao, Bing-Huan", editor="Huang, De-Shuang and Zhang, Xiao-Ping and Huang, Guang-Bin", title="Borderline-SMOTE: A New Over-Sampling Method in Imbalanced Data Sets Learning", booktitle="Advances in Intelligent Computing", year="2005", publisher="Springer Berlin Heidelberg", address="Berlin, Heidelberg", pages="878--887", isbn="978-3-540-31902-3" }

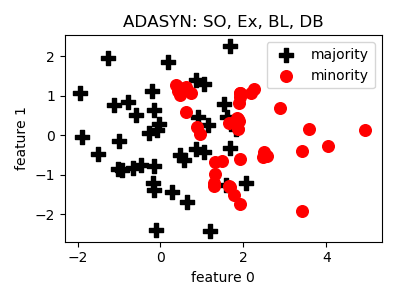

ADASYN¶

API¶

-

class

smote_variants.ADASYN(n_neighbors=5, d_th=0.9, beta=1.0, n_jobs=1, random_state=None)[source]¶ -

__init__(n_neighbors=5, d_th=0.9, beta=1.0, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - n_neighbors (int) – control parameter of the nearest neighbor component

- d_th (float) – tolerated deviation level from balancedness

- beta (float) – target level of balancedness, same as proportion in other techniques

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn
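The interplay of `d_th` and `beta` can be sketched following the ADASYN paper: sampling only happens when the balance degree n_min/n_maj is below `d_th`, the total number of new samples is `beta` times the class difference, and each minority sample receives a share proportional to the fraction of majority samples among its neighbours. The function `adasyn_allocation` and the rounding are assumptions for this example, not the library's code.

```python
def adasyn_allocation(n_maj, n_min, maj_neighbour_counts,
                      n_neighbors=5, d_th=0.9, beta=1.0):
    """Return how many synthetic samples to create per minority sample."""
    degree = n_min / n_maj
    if degree >= d_th:
        return [0] * n_min  # already balanced enough, nothing to generate
    total = (n_maj - n_min) * beta
    ratios = [c / n_neighbors for c in maj_neighbour_counts]
    norm = sum(ratios)
    if norm == 0:
        return [0] * n_min  # no minority sample has majority neighbours
    return [round(r / norm * total) for r in ratios]


# Four minority samples with 0, 1, 2 and 5 majority samples among their 5 neighbours.
counts = adasyn_allocation(n_maj=20, n_min=4, maj_neighbour_counts=[0, 1, 2, 5])
```

The sample surrounded entirely by majority neighbours receives the largest share, which is the adaptive part of the method.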

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.ADASYN()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@inproceedings{adasyn, author={He, H. and Bai, Y. and Garcia, E. A. and Li, S.}, title={{ADASYN}: adaptive synthetic sampling approach for imbalanced learning}, booktitle={Proceedings of IJCNN}, year={2008}, pages={1322--1328} }

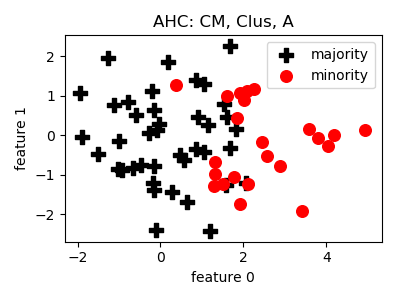

AHC¶

API¶

-

class

smote_variants.AHC(strategy='min', n_jobs=1, random_state=None)[source]¶ -

__init__(strategy='min', n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters:

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

classmethod

parameter_combinations(raw=False)[source]¶ Generates reasonable parameter combinations.

Returns: a list of meaningful parameter combinations Return type: list(dict)
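The `list(dict)` return type suggests a grid of constructor arguments. A minimal sketch of building such a grid is given below; the helper `parameter_grid` and the candidate values are hypothetical, and the meaning of the `raw` flag is not modelled.

```python
from itertools import product


def parameter_grid(options):
    """Expand a dict of candidate values into a list of parameter dicts."""
    names = list(options)
    return [dict(zip(names, values))
            for values in product(*(options[name] for name in names))]


# Hypothetical candidate values, not the library's actual choices.
grid = parameter_grid({"proportion": [0.5, 1.0], "n_neighbors": [3, 5, 7]})
```

Each dict in the list can then be unpacked into a sampler constructor, for example `SomeSampler(**grid[0])`, when searching over settings.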

-

sample(X, y)[source]¶ Does the sample generation according to the class parameters.

Parameters: - X (np.ndarray) – training set

- y (np.array) – target labels

Returns: the extended training set and target labels

Return type: (np.ndarray, np.array)

-

Example¶

>>> oversampler= smote_variants.AHC()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@article{AHC, title = "Learning from imbalanced data in surveillance of nosocomial infection", journal = "Artificial Intelligence in Medicine", volume = "37", number = "1", pages = "7 - 18", year = "2006", note = "Intelligent Data Analysis in Medicine", issn = "0933-3657", doi = "https://doi.org/10.1016/j.artmed.2005.03.002", url = {http://www.sciencedirect.com/science/article/pii/S0933365705000850}, author = "Gilles Cohen and Mélanie Hilario and Hugo Sax and Stéphane Hugonnet and Antoine Geissbuhler", keywords = "Nosocomial infection, Machine learning, Support vector machines, Data imbalance" }

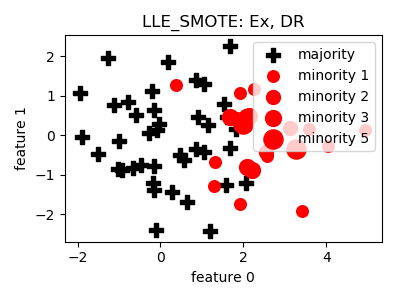

LLE_SMOTE¶

API¶

-

class

smote_variants.LLE_SMOTE(proportion=1.0, n_neighbors=5, n_components=2, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, n_components=2, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – control parameter of the nearest neighbor component

- n_components (int) – dimensionality of the embedding space

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.LLE_SMOTE()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@INPROCEEDINGS{lle_smote, author={Wang, J. and Xu, M. and Wang, H. and Zhang, J.}, booktitle={2006 8th international Conference on Signal Processing}, title={Classification of Imbalanced Data by Using the SMOTE Algorithm and Locally Linear Embedding}, year={2006}, volume={3}, number={}, pages={}, keywords={artificial intelligence; biomedical imaging;medical computing; imbalanced data classification; SMOTE algorithm; locally linear embedding; medical imaging intelligence; synthetic minority oversampling technique; high-dimensional data; low-dimensional space; Biomedical imaging; Back;Training data; Data mining;Biomedical engineering; Research and development; Electronic mail;Pattern recognition; Performance analysis; Classification algorithms}, doi={10.1109/ICOSP.2006.345752}, ISSN={2164-5221}, month={Nov}}

- Notes:

- There might be numerical issues if the nearest neighbors contain some element multiple times.

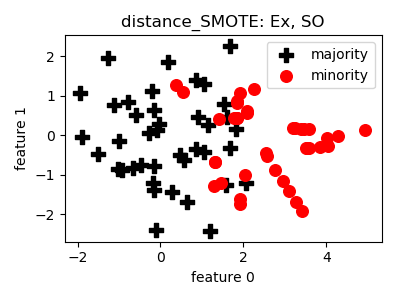

distance_SMOTE¶

API¶

-

class

smote_variants.distance_SMOTE(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – control parameter of the nearest neighbor component

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.distance_SMOTE()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@INPROCEEDINGS{distance_smote, author={de la Calleja, J. and Fuentes, O.}, booktitle={Proceedings of the Twentieth International Florida Artificial Intelligence}, title={A distance-based over-sampling method for learning from imbalanced data sets}, year={2007}, volume={3}, pages={634--635} }

- Notes:

- It is not clear what the authors mean by “weighted distance”.

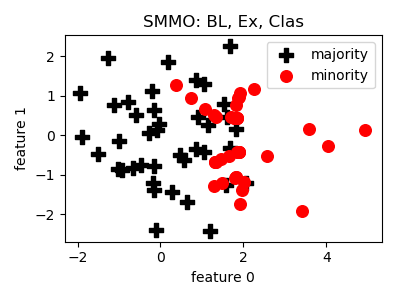

SMMO¶

API¶

-

class

smote_variants.SMMO(proportion=1.0, n_neighbors=5, ensemble=[QuadraticDiscriminantAnalysis(), DecisionTreeClassifier(random_state=2), GaussianNB()], n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, ensemble=[QuadraticDiscriminantAnalysis(), DecisionTreeClassifier(random_state=2), GaussianNB()], n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – control parameter of the nearest neighbor component

- ensemble (list) – list of classifiers, if None, default list of classifiers is used

- n_jobs (int) – number of parallel jobs

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.SMMO()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@InProceedings{smmo, author = {de la Calleja, Jorge and Fuentes, Olac and González, Jesús}, booktitle= {Proceedings of the Twenty-First International Florida Artificial Intelligence Research Society Conference}, year = {2008}, month = {01}, pages = {276-281}, title = {Selecting Minority Examples from Misclassified Data for Over-Sampling.} }

- Notes:

- In this paper the ensemble is not specified. I have selected some very fast, basic classifiers.

- Also, it is not clear what the authors mean by “weighted distance”.

- The original technique is not prepared for the case when no minority samples are classified correctly by the ensemble.

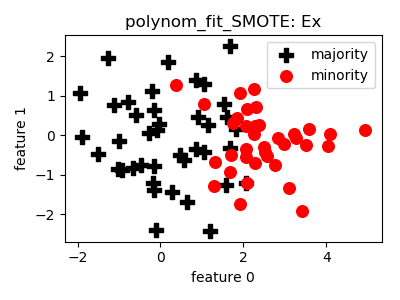

polynom_fit_SMOTE¶

API¶

-

class

smote_variants.polynom_fit_SMOTE(proportion=1.0, topology='star', random_state=None)[source]¶ -

__init__(proportion=1.0, topology='star', random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- topology (str) – ‘star’/‘bus’/‘mesh’

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn
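As an illustration of the default `topology='star'`, the sketch below places synthetic points on the straight segments that connect each minority sample to the minority class mean. This reading of the star topology, the helper name `star_topology_samples`, and the fixed `per_point` count are assumptions made for this example; the library derives the number of points from `proportion` and may differ in detail.

```python
def star_topology_samples(X_min, per_point=2):
    """Place per_point synthetic samples on each segment from a minority point to the minority mean."""
    dim = len(X_min[0])
    mean = [sum(x[d] for x in X_min) / len(X_min) for d in range(dim)]
    samples = []
    for x in X_min:
        for i in range(1, per_point + 1):
            t = i / (per_point + 1)  # evenly spaced, strictly between the endpoints
            samples.append([x[d] + t * (mean[d] - x[d]) for d in range(dim)])
    return samples


X_min = [[0.0, 0.0], [3.0, 0.0], [0.0, 3.0]]
samples = star_topology_samples(X_min, per_point=2)
```

Every generated point lies between an original minority sample and the class centre, so the new samples form the spokes of a star.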

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.polynom_fit_SMOTE()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@INPROCEEDINGS{polynomial_fit_smote, author={Gazzah, S. and Amara, N. E. B.}, booktitle={2008 The Eighth IAPR International Workshop on Document Analysis Systems}, title={New Oversampling Approaches Based on Polynomial Fitting for Imbalanced Data Sets}, year={2008}, volume={}, number={}, pages={677-684}, keywords={curve fitting;learning (artificial intelligence);mesh generation;pattern classification;polynomials;sampling methods;support vector machines; oversampling approach;polynomial fitting function;imbalanced data set;pattern classification task; class-modular strategy;support vector machine;true negative rate; true positive rate;star topology; bus topology;polynomial curve topology;mesh topology;Polynomials; Topology;Support vector machines; Support vector machine classification; Pattern classification;Performance evaluation;Training data;Text analysis;Data engineering;Convergence; writer identification system;majority class;minority class;imbalanced data sets;polynomial fitting functions; class-modular strategy}, doi={10.1109/DAS.2008.74}, ISSN={}, month={Sept},}

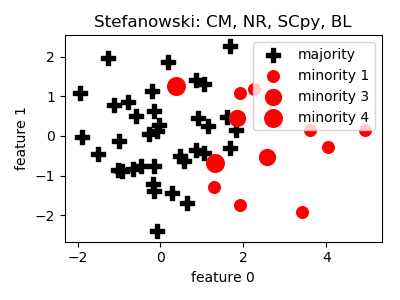

Stefanowski¶

API¶

-

class

smote_variants.Stefanowski(strategy='weak_amp', n_jobs=1, random_state=None)[source]¶ -

__init__(strategy='weak_amp', n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters:

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.Stefanowski()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@inproceedings{stefanowski, author = {Stefanowski, Jerzy and Wilk, Szymon}, title = {Selective Pre-processing of Imbalanced Data for Improving Classification Performance}, booktitle = {Proceedings of the 10th International Conference on Data Warehousing and Knowledge Discovery}, series = {DaWaK '08}, year = {2008}, isbn = {978-3-540-85835-5}, location = {Turin, Italy}, pages = {283--292}, numpages = {10}, url = {http://dx.doi.org/10.1007/978-3-540-85836-2_27}, doi = {10.1007/978-3-540-85836-2_27}, acmid = {1430591}, publisher = {Springer-Verlag}, address = {Berlin, Heidelberg}, }

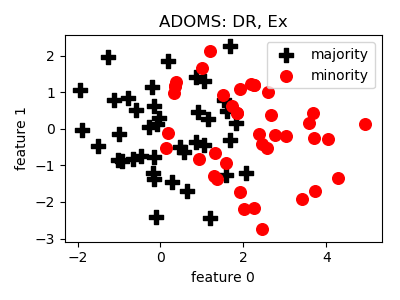

ADOMS¶

API¶

-

class

smote_variants.ADOMS(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – parameter of the nearest neighbor component

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.ADOMS()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@INPROCEEDINGS{adoms, author={Tang, S. and Chen, S.}, booktitle={2008 International Conference on Information Technology and Applications in Biomedicine}, title={The generation mechanism of synthetic minority class examples}, year={2008}, volume={}, number={}, pages={444-447}, keywords={medical image processing; generation mechanism;synthetic minority class examples;class imbalance problem;medical image analysis;oversampling algorithm; Principal component analysis; Biomedical imaging;Medical diagnostic imaging;Information technology;Biomedical engineering; Noise generators;Concrete;Nearest neighbor searches;Data analysis; Image analysis}, doi={10.1109/ITAB.2008.4570642}, ISSN={2168-2194}, month={May}}

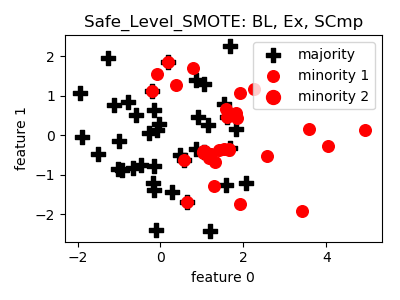

Safe_Level_SMOTE¶

API¶

-

class

smote_variants.Safe_Level_SMOTE(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – control parameter of the nearest neighbor component

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.Safe_Level_SMOTE()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@inproceedings{safe_level_smote, author = { Bunkhumpornpat, Chumphol and Sinapiromsaran, Krung and Lursinsap, Chidchanok}, title = {Safe-Level-SMOTE: Safe-Level-Synthetic Minority Over-Sampling TEchnique for Handling the Class Imbalanced Problem}, booktitle = {Proceedings of the 13th Pacific-Asia Conference on Advances in Knowledge Discovery and Data Mining}, series = {PAKDD '09}, year = {2009}, isbn = {978-3-642-01306-5}, location = {Bangkok, Thailand}, pages = {475--482}, numpages = {8}, url = {http://dx.doi.org/10.1007/978-3-642-01307-2_43}, doi = {10.1007/978-3-642-01307-2_43}, acmid = {1533904}, publisher = {Springer-Verlag}, address = {Berlin, Heidelberg}, keywords = {Class Imbalanced Problem, Over-sampling, SMOTE, Safe Level}, }

- Notes:

- The original method was not prepared for the case when no minority sample has minority neighbors.
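The degenerate case in the note is easier to see with the safe level written out: in the Safe-Level-SMOTE paper, the safe level of a minority sample is the number of minority samples among its nearest neighbours. The function `safe_levels` below is a hypothetical sketch on toy data, not the library's implementation.

```python
import math


def safe_levels(X, y, min_label, n_neighbors=5):
    """Map each minority index to the number of minority samples among its nearest neighbours."""
    levels = {}
    for i, label in enumerate(y):
        if label != min_label:
            continue
        order = sorted((j for j in range(len(X)) if j != i),
                       key=lambda j: math.dist(X[i], X[j]))
        levels[i] = sum(1 for j in order[:n_neighbors] if y[j] == min_label)
    return levels


X = [[0.0], [0.1], [1.0], [1.1], [1.2], [2.0]]
y = [1, 1, 0, 0, 0, 1]
levels = safe_levels(X, y, min_label=1, n_neighbors=2)
all_unsafe = all(level == 0 for level in levels.values())
```

If `all_unsafe` were true, every safe level would be zero, which is the situation the note says the original method does not handle.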

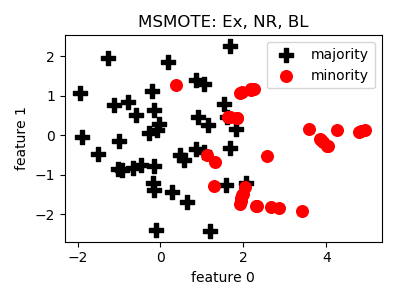

MSMOTE¶

API¶

-

class

smote_variants.MSMOTE(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – control parameter of the nearest neighbor component

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.MSMOTE()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@inproceedings{msmote, author = {Hu, Shengguo and Liang, Yanfeng and Ma, Lintao and He, Ying}, title = {MSMOTE: Improving Classification Performance When Training Data is Imbalanced}, booktitle = {Proceedings of the 2009 Second International Workshop on Computer Science and Engineering - Volume 02}, series = {IWCSE '09}, year = {2009}, isbn = {978-0-7695-3881-5}, pages = {13--17}, numpages = {5}, url = {https://doi.org/10.1109/WCSE.2009.756}, doi = {10.1109/WCSE.2009.756}, acmid = {1682710}, publisher = {IEEE Computer Society}, address = {Washington, DC, USA}, keywords = {imbalanced data, over-sampling, SMOTE, AdaBoost, samples groups, SMOTEBoost}, }

- Notes:

- The original method was not prepared for the case when all minority samples are noise.

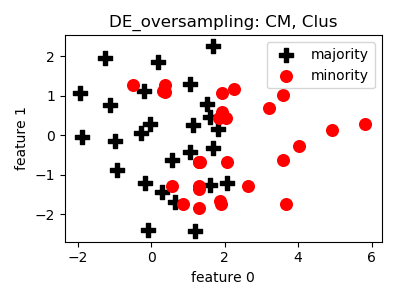

DE_oversampling¶

API¶

-

class

smote_variants.DE_oversampling(proportion=1.0, n_neighbors=5, crossover_rate=0.5, similarity_threshold=0.5, n_clusters=30, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, crossover_rate=0.5, similarity_threshold=0.5, n_clusters=30, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – control parameter of the nearest neighbor component

- crossover_rate (float) – crossover rate of the evolution

- similarity_threshold (float) – similarity threshold parameter

- n_clusters (int) – number of clusters for cleansing

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.DE_oversampling()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@INPROCEEDINGS{de_oversampling, author={Chen, L. and Cai, Z. and Chen, L. and Gu, Q.}, booktitle={2010 Third International Conference on Knowledge Discovery and Data Mining}, title={A Novel Differential Evolution-Clustering Hybrid Resampling Algorithm on Imbalanced Datasets}, year={2010}, volume={}, number={}, pages={81-85}, keywords={pattern clustering;sampling methods; support vector machines;differential evolution;clustering algorithm;hybrid resampling algorithm;imbalanced datasets;support vector machine; minority class;mutation operators; crossover operators;data cleaning method;F-measure criterion;ROC area criterion;Support vector machines; Intrusion detection;Support vector machine classification;Cleaning; Electronic mail;Clustering algorithms; Signal to noise ratio;Learning systems;Data mining;Geology;imbalanced datasets;hybrid resampling;clustering; differential evolution;support vector machine}, doi={10.1109/WKDD.2010.48}, ISSN={}, month={Jan},}

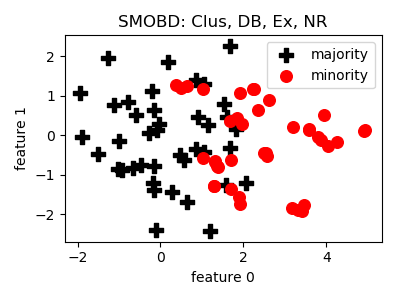

SMOBD¶

API¶

-

class

smote_variants.SMOBD(proportion=1.0, eta1=0.5, t=1.8, min_samples=5, max_eps=1.0, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, eta1=0.5, t=1.8, min_samples=5, max_eps=1.0, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- eta1 (float) – control parameter of density estimation

- t (float) – control parameter of noise filtering

- min_samples (int) – minimum samples parameter for OPTICS

- max_eps (float) – maximum environment radius parameter for OPTICS

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.SMOBD()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@INPROCEEDINGS{smobd, author={Cao, Q. and Wang, S.}, booktitle={2011 International Conference on Information Management, Innovation Management and Industrial Engineering}, title={Applying Over-sampling Technique Based on Data Density and Cost-sensitive SVM to Imbalanced Learning}, year={2011}, volume={2}, number={}, pages={543-548}, keywords={data handling;learning (artificial intelligence);support vector machines; oversampling technique application; data density;cost sensitive SVM; imbalanced learning;SMOTE algorithm; data distribution;density information; Support vector machines;Classification algorithms;Noise measurement;Arrays; Noise;Algorithm design and analysis; Training;imbalanced learning; cost-sensitive SVM;SMOTE;data density; SMOBD}, doi={10.1109/ICIII.2011.276}, ISSN={2155-1456}, month={Nov},}

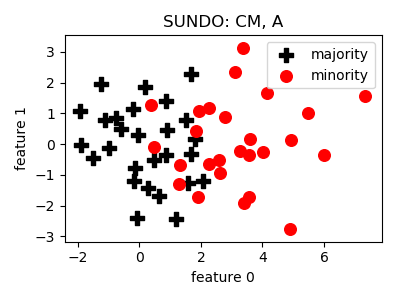

SUNDO¶

API¶

-

class

smote_variants.SUNDO(n_jobs=1, random_state=None)[source]¶ -

__init__(n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.SUNDO()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@INPROCEEDINGS{sundo, author={Cateni, S. and Colla, V. and Vannucci, M.}, booktitle={2011 11th International Conference on Intelligent Systems Design and Applications}, title={Novel resampling method for the classification of imbalanced datasets for industrial and other real-world problems}, year={2011}, volume={}, number={}, pages={402-407}, keywords={decision trees;pattern classification; sampling methods;support vector machines;resampling method;imbalanced dataset classification;industrial problem;real world problem; oversampling technique;undersampling technique;support vector machine; decision tree;binary classification; synthetic dataset;public dataset; industrial dataset;Support vector machines;Training;Accuracy;Databases; Intelligent systems;Breast cancer; Decision trees;oversampling; undersampling;imbalanced dataset}, doi={10.1109/ISDA.2011.6121689}, ISSN={2164-7151}, month={Nov}}

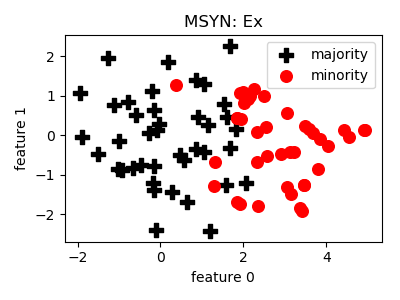

MSYN¶

API¶

-

class

smote_variants.MSYN(pressure=1.5, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ -

__init__(pressure=1.5, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - pressure (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – number of neighbors in the SMOTE sampling

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.MSYN()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@InProceedings{msyn, author="Fan, Xiannian and Tang, Ke and Weise, Thomas", editor="Huang, Joshua Zhexue and Cao, Longbing and Srivastava, Jaideep", title="Margin-Based Over-Sampling Method for Learning from Imbalanced Datasets", booktitle="Advances in Knowledge Discovery and Data Mining", year="2011", publisher="Springer Berlin Heidelberg", address="Berlin, Heidelberg", pages="309--320", abstract="Learning from imbalanced datasets has drawn more and more attentions from both theoretical and practical aspects. Over- sampling is a popular and simple method for imbalanced learning. In this paper, we show that there is an inherently potential risk associated with the over-sampling algorithms in terms of the large margin principle. Then we propose a new synthetic over sampling method, named Margin-guided Synthetic Over-sampling (MSYN), to reduce this risk. The MSYN improves learning with respect to the data distributions guided by the margin-based rule. Empirical study verities the efficacy of MSYN.", isbn="978-3-642-20847-8" }

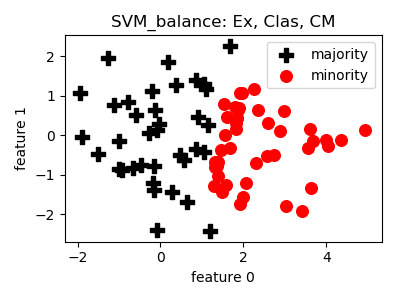

SVM_balance¶

API¶

-

class

smote_variants.SVM_balance(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – number of neighbors in the SMOTE sampling

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.SVM_balance()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@article{svm_balance, author = {Farquad, M.A.H. and Bose, Indranil}, title = {Preprocessing Unbalanced Data Using Support Vector Machine}, journal = {Decis. Support Syst.}, issue_date = {April, 2012}, volume = {53}, number = {1}, month = apr, year = {2012}, issn = {0167-9236}, pages = {226--233}, numpages = {8}, url = {http://dx.doi.org/10.1016/j.dss.2012.01.016}, doi = {10.1016/j.dss.2012.01.016}, acmid = {2181554}, publisher = {Elsevier Science Publishers B. V.}, address = {Amsterdam, The Netherlands, The Netherlands}, keywords = {COIL data, Hybrid method, Preprocessor, SVM, Unbalanced data}, }

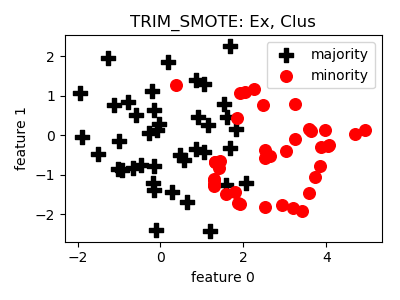

TRIM_SMOTE¶

API¶

-

class

smote_variants.TRIM_SMOTE(proportion=1.0, n_neighbors=5, min_precision=0.3, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, min_precision=0.3, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – number of neighbors in the SMOTE sampling

- min_precision (float) – minimum value of the precision

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

determine_splitting_point(X, y, split_on_border=False)[source]¶ Determines the splitting point.

Parameters: - X (np.matrix) – a subset of the training data

- y (np.array) – an array of target labels

- split_on_border (bool) – whether splitting on class borders is considered

Returns: (splitting feature, splitting value), and whether to make the split

Return type:

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

classmethod

parameter_combinations(raw=False)[source]¶ Generates reasonable parameter combinations.

Returns: a list of meaningful parameter combinations Return type: list(dict)

-

precision(y)[source]¶ Determines the precision value.

Parameters: y (np.array) – array of target labels Returns: the precision value Return type: float

-

Example¶

>>> oversampler= smote_variants.TRIM_SMOTE()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@InProceedings{trim_smote, author="Puntumapon, Kamthorn and Waiyamai, Kitsana", editor="Tan, Pang-Ning and Chawla, Sanjay and Ho, Chin Kuan and Bailey, James", title="A Pruning-Based Approach for Searching Precise and Generalized Region for Synthetic Minority Over-Sampling", booktitle="Advances in Knowledge Discovery and Data Mining", year="2012", publisher="Springer Berlin Heidelberg", address="Berlin, Heidelberg", pages="371--382", isbn="978-3-642-30220-6" }

- Notes:

- It is not described precisely how the filtered data is used for sample generation. The method is proposed to be a preprocessing step, and it states that it applies sample generation to each group extracted.
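
As an illustration of how `precision(y)` and `min_precision` could interact, here is a minimal sketch. It assumes that precision is the fraction of minority labels in a group and that a group is kept only if it reaches `min_precision`; `min_label` and `keep_group` are hypothetical names, and neither assumption is confirmed by the text above.

```python
def precision(y, min_label=1):
    """Fraction of the labels in `y` that belong to the minority class.

    Assumed definition for illustration; `min_label` is a hypothetical
    argument naming the minority class label.
    """
    if len(y) == 0:
        return 0.0
    return sum(1 for label in y if label == min_label) / len(y)


def keep_group(y, min_precision=0.3, min_label=1):
    """Keep a group only if its precision reaches the threshold (assumption)."""
    return precision(y, min_label) >= min_precision


print(precision([1, 1, 0, 0]))    # 0.5
print(keep_group([1, 0, 0, 0]))   # False: 0.25 is below the 0.3 default
```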

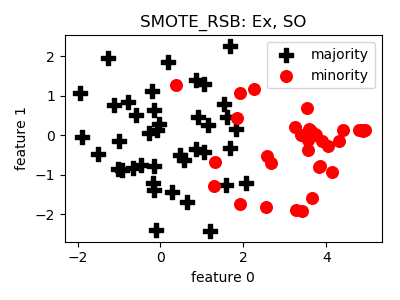

SMOTE_RSB¶

API¶

-

class

smote_variants.SMOTE_RSB(proportion=2.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=2.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – number of neighbors in the SMOTE sampling

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.SMOTE_RSB()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@Article{smote_rsb, author="Ramentol, Enislay and Caballero, Yail{\'e} and Bello, Rafael and Herrera, Francisco", title="SMOTE-RSB*: a hybrid preprocessing approach based on oversampling and undersampling for high imbalanced data-sets using SMOTE and rough sets theory", journal="Knowledge and Information Systems", year="2012", month="Nov", day="01", volume="33", number="2", pages="245--265", issn="0219-3116", doi="10.1007/s10115-011-0465-6", url="https://doi.org/10.1007/s10115-011-0465-6" }

- Notes:

- I think the description of the algorithm in Fig 5 of the paper is not correct. The set “resultSet” is initialized with the original instances, and then the While loop in the algorithm runs until resultSet is empty, which never holds. Also, the resultSet is only extended in the loop. Our implementation is changed in the following way: we generate twice as many instances as are required to balance the dataset, and repeat the loop until the number of new samples added to the training set is enough to balance the dataset.

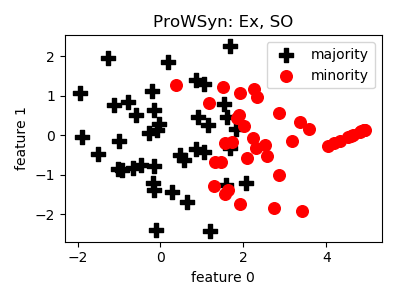

ProWSyn¶

API¶

-

class

smote_variants.ProWSyn(proportion=1.0, n_neighbors=5, L=5, theta=1.0, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, L=5, theta=1.0, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – number of neighbors in nearest neighbors component

- L (int) – number of levels

- theta (float) – smoothing factor in weight formula

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.ProWSyn()

>>> X_samp, y_samp= oversampler.sample(X, y)
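
To give a feel for how `L` and `theta` interact, the sketch below computes one weight per proximity level. It assumes an exponential weight of the form exp(-theta * (level - 1)), normalized to sum to one; this form and the `level_weights` helper are assumptions for illustration, not taken from the library code.

```python
import math


def level_weights(L=5, theta=1.0):
    """Normalized weights for proximity levels 1..L.

    Assumes the weight of a level decays as exp(-theta * (level - 1));
    hypothetical helper, not part of smote_variants.
    """
    raw = [math.exp(-theta * (level - 1)) for level in range(1, L + 1)]
    total = sum(raw)
    return [w / total for w in raw]


# Larger theta concentrates the weight on the first (closest) level.
print(level_weights(L=5, theta=1.0))
print(level_weights(L=5, theta=0.0))  # theta = 0 gives uniform weights
```

Under this assumption, `theta` controls how strongly sample generation favors minority points close to the majority class, and `L` controls how many distance bands are distinguished.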

- References:

BibTex:

@InProceedings{prowsyn, author="Barua, Sukarna and Islam, Md. Monirul and Murase, Kazuyuki", editor="Pei, Jian and Tseng, Vincent S. and Cao, Longbing and Motoda, Hiroshi and Xu, Guandong", title="ProWSyn: Proximity Weighted Synthetic Oversampling Technique for Imbalanced Data Set Learning", booktitle="Advances in Knowledge Discovery and Data Mining", year="2013", publisher="Springer Berlin Heidelberg", address="Berlin, Heidelberg", pages="317--328", isbn="978-3-642-37456-2" }

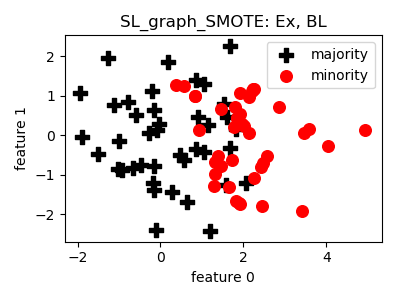

SL_graph_SMOTE¶

API¶

-

class

smote_variants.SL_graph_SMOTE(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – number of neighbors in nearest neighbors component

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.SL_graph_SMOTE()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@inproceedings{sl_graph_smote, author = {Bunkhumpornpat, Chumpol and Subpaiboonkit, Sitthichoke}, booktitle= {13th International Symposium on Communications and Information Technologies}, year = {2013}, month = {09}, pages = {570-575}, title = {Safe level graph for synthetic minority over-sampling techniques}, isbn = {978-1-4673-5578-0} }

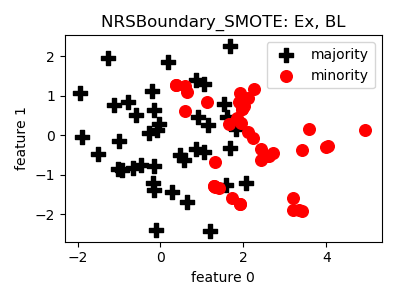

NRSBoundary_SMOTE¶

API¶

-

class

smote_variants.NRSBoundary_SMOTE(proportion=1.0, n_neighbors=5, w=0.005, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, w=0.005, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – number of neighbors in nearest neighbors component

- w (float) – used to set neighborhood radius

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.NRSBoundary_SMOTE()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@Article{nrsboundary_smote, author= {Feng, Hu and Hang, Li}, title= {A Novel Boundary Oversampling Algorithm Based on Neighborhood Rough Set Model: NRSBoundary-SMOTE}, journal= {Mathematical Problems in Engineering}, year= {2013}, pages= {10}, doi= {10.1155/2013/694809}, url= {http://dx.doi.org/10.1155/2013/694809} }

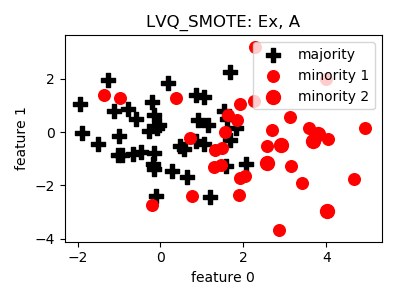

LVQ_SMOTE¶

API¶

-

class

smote_variants.LVQ_SMOTE(proportion=1.0, n_neighbors=5, n_clusters=10, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, n_clusters=10, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – number of neighbors in nearest neighbors component

- n_clusters (int) – number of clusters in vector quantization

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.LVQ_SMOTE()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@inproceedings{lvq_smote, title={LVQ-SMOTE – Learning Vector Quantization based Synthetic Minority Over–sampling Technique for biomedical data}, author={Munehiro Nakamura and Yusuke Kajiwara and Atsushi Otsuka and Haruhiko Kimura}, booktitle={BioData Mining}, year={2013} }

- Notes:

- This implementation is only a rough approximation of the method described in the paper. The main problem is that the paper uses many datasets to find similar patterns in the codebooks and replicates patterns appearing in other datasets to the imbalanced datasets based on their relative position compared to the codebook elements. What we do is cluster the minority class to extract a codebook as k-means cluster means, then find pairs of codebook elements which have the most similar relative position to a randomly selected pair of codebook elements, and translate nearby minority samples from the neighborhood of one pair of codebook elements to the neighborhood of another pair of codebook elements.

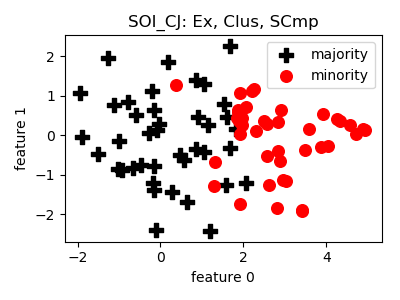

SOI_CJ¶

API¶

-

class

smote_variants.SOI_CJ(proportion=1.0, n_neighbors=5, method='interpolation', n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, method='interpolation', n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – number of nearest neighbors in the SMOTE sampling

- method (str) – 'interpolation'/'jittering'

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

clustering(X, y)[source]¶ Implementation of the clustering technique described in the paper.

Parameters: - X (np.matrix) – array of training instances

- y (np.array) – target labels

Returns: list of minority clusters

Return type:

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.SOI_CJ()

>>> X_samp, y_samp= oversampler.sample(X, y)
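
The two values of `method` name two common ways of generating a point from members of a minority cluster. The sketch below shows a generic version of each; the helper names, the Gaussian noise, and the per-feature `scale` argument are assumptions for illustration and may differ from what the class actually does.

```python
import random


def interpolation_sample(a, b, rng):
    """Random point on the segment between two minority samples."""
    t = rng.random()
    return [x + t * (y - x) for x, y in zip(a, b)]


def jittering_sample(a, scale, rng):
    """Copy of one minority sample with Gaussian noise of per-feature `scale`."""
    return [x + rng.gauss(0.0, s) for x, s in zip(a, scale)]


rng = random.Random(0)
cluster = [[0.0, 0.0], [1.0, 2.0]]  # two members of one minority cluster
print(interpolation_sample(cluster[0], cluster[1], rng))
print(jittering_sample(cluster[0], [0.1, 0.1], rng))
```

Interpolation needs at least two samples in a cluster, while jittering also works on a cluster with a single member.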

- References:

BibTex:

@article{soi_cj, author = {Sánchez, Atlántida I. and Morales, Eduardo and Gonzalez, Jesus}, year = {2013}, month = {01}, pages = {}, title = {Synthetic Oversampling of Instances Using Clustering}, volume = {22}, booktitle = {International Journal of Artificial Intelligence Tools} }

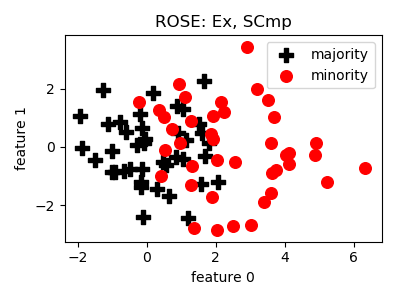

ROSE¶

API¶

-

class

smote_variants.ROSE(proportion=1.0, random_state=None)[source]¶ -

__init__(proportion=1.0, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.ROSE()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@Article{rose, author="Menardi, Giovanna and Torelli, Nicola", title="Training and assessing classification rules with imbalanced data", journal="Data Mining and Knowledge Discovery", year="2014", month="Jan", day="01", volume="28", number="1", pages="92--122", issn="1573-756X", doi="10.1007/s10618-012-0295-5", url="https://doi.org/10.1007/s10618-012-0295-5" }

- Notes:

- It is not entirely clear if the authors propose kernel density estimation or the fitting of simple multivariate Gaussians on the minority samples. The latter seems more likely, so that approach is implemented.

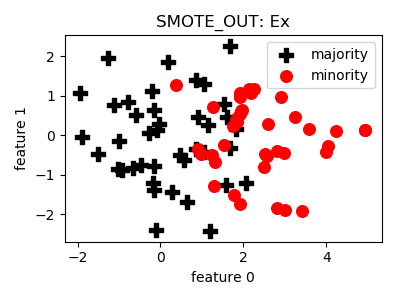

SMOTE_OUT¶

API¶

-

class

smote_variants.SMOTE_OUT(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – parameter of the NearestNeighbors component

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.SMOTE_OUT()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@article{smote_out_smote_cosine_selected_smote, title={SMOTE-Out, SMOTE-Cosine, and Selected-SMOTE: An enhancement strategy to handle imbalance in data level}, author={Fajri Koto}, journal={2014 International Conference on Advanced Computer Science and Information System}, year={2014}, pages={280-284} }

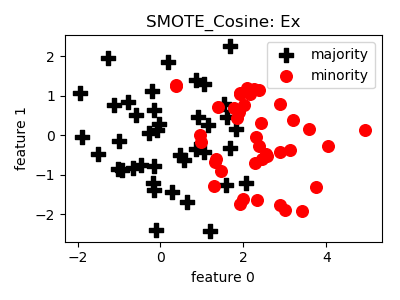

SMOTE_Cosine¶

API¶

-

class

smote_variants.SMOTE_Cosine(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – parameter of the NearestNeighbors component

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.SMOTE_Cosine()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@article{smote_out_smote_cosine_selected_smote, title={SMOTE-Out, SMOTE-Cosine, and Selected-SMOTE: An enhancement strategy to handle imbalance in data level}, author={Fajri Koto}, journal={2014 International Conference on Advanced Computer Science and Information System}, year={2014}, pages={280-284} }

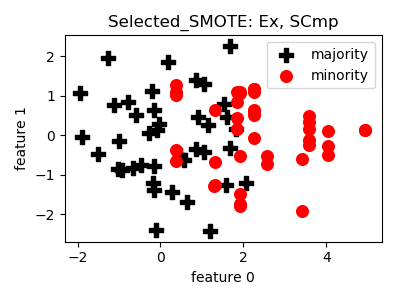

Selected_SMOTE¶

API¶

-

class

smote_variants.Selected_SMOTE(proportion=1.0, n_neighbors=5, perc_sign_attr=0.5, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, perc_sign_attr=0.5, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – parameter of the NearestNeighbors component

- perc_sign_attr (float) – [0,1] - percentage of significant attributes

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.Selected_SMOTE()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@article{smote_out_smote_cosine_selected_smote, title={SMOTE-Out, SMOTE-Cosine, and Selected-SMOTE: An enhancement strategy to handle imbalance in data level}, author={Fajri Koto}, journal={2014 International Conference on Advanced Computer Science and Information System}, year={2014}, pages={280-284} }

- Notes:

- Significant attribute selection was not described in the paper, therefore we have implemented something meaningful.
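
One way to picture the role of `perc_sign_attr` is a SMOTE-style interpolation restricted to the attributes marked as significant. The sketch below assumes exactly that: the helper names are hypothetical, the mask is passed in by hand, and how the library chooses significant attributes is not shown.

```python
import random


def n_significant(n_attributes, perc_sign_attr=0.5):
    """Number of attributes treated as significant (assumed truncation)."""
    return int(n_attributes * perc_sign_attr)


def selected_interpolation(base, neighbor, significant, rng):
    """Interpolate only along attributes flagged as significant.

    `significant` holds one boolean per attribute; the other attributes
    are copied from `base`. Hypothetical helper for illustration.
    """
    t = rng.random()
    return [b + t * (n - b) if s else b
            for b, n, s in zip(base, neighbor, significant)]


rng = random.Random(0)
print(n_significant(4))  # 2
print(selected_interpolation([0.0, 5.0], [1.0, 9.0], [True, False], rng))
```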

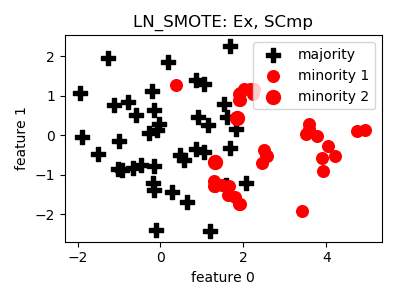

LN_SMOTE¶

API¶

-

class

smote_variants.LN_SMOTE(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – parameter of the NearestNeighbors component

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.LN_SMOTE()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@INPROCEEDINGS{ln_smote, author={Maciejewski, T. and Stefanowski, J.}, booktitle={2011 IEEE Symposium on Computational Intelligence and Data Mining (CIDM)}, title={Local neighbourhood extension of SMOTE for mining imbalanced data}, year={2011}, volume={}, number={}, pages={104-111}, keywords={Bayes methods;data mining;pattern classification;local neighbourhood extension;imbalanced data mining; focused resampling technique;SMOTE over-sampling method;naive Bayes classifiers;Noise measurement;Noise; Decision trees;Breast cancer; Sensitivity;Data mining;Training}, doi={10.1109/CIDM.2011.5949434}, ISSN={}, month={April}}

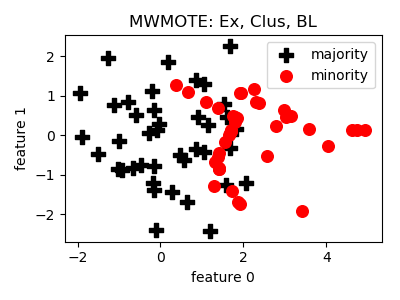

MWMOTE¶

API¶

-

class

smote_variants.MWMOTE(proportion=1.0, k1=5, k2=5, k3=5, M=10, cf_th=5.0, cmax=10.0, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, k1=5, k2=5, k3=5, M=10, cf_th=5.0, cmax=10.0, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- k1 (int) – parameter of the NearestNeighbors component

- k2 (int) – parameter of the NearestNeighbors component

- k3 (int) – parameter of the NearestNeighbors component

- M (int) – number of clusters

- cf_th (float) – cutoff threshold

- cmax (float) – maximum closeness value

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.MWMOTE()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@ARTICLE{mwmote, author={Barua, S. and Islam, M. M. and Yao, X. and Murase, K.}, journal={IEEE Transactions on Knowledge and Data Engineering}, title={MWMOTE--Majority Weighted Minority Oversampling Technique for Imbalanced Data Set Learning}, year={2014}, volume={26}, number={2}, pages={405-425}, keywords={learning (artificial intelligence);pattern clustering;sampling methods;AUC;area under curve;ROC;receiver operating curve;G-mean; geometric mean;minority class cluster; clustering approach;weighted informative minority class samples;Euclidean distance; hard-to-learn informative minority class samples;majority class;synthetic minority class samples;synthetic oversampling methods;imbalanced learning problems; imbalanced data set learning; MWMOTE-majority weighted minority oversampling technique;Sampling methods; Noise measurement;Boosting;Simulation; Complexity theory;Interpolation;Abstracts; Imbalanced learning;undersampling; oversampling;synthetic sample generation; clustering}, doi={10.1109/TKDE.2012.232}, ISSN={1041-4347}, month={Feb}}

- Notes:

- The original method was not prepared for the case of having clusters of 1 element.

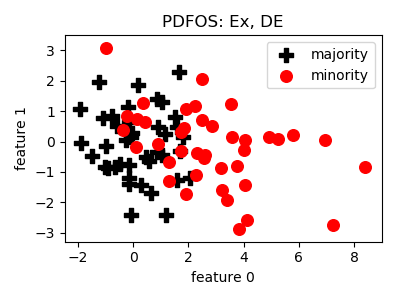

PDFOS¶

API¶

-

class

smote_variants.PDFOS(proportion=1.0, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.PDFOS()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@article{pdfos, title = "PDFOS: PDF estimation based over-sampling for imbalanced two-class problems", journal = "Neurocomputing", volume = "138", pages = "248 - 259", year = "2014", issn = "0925-2312", doi = "https://doi.org/10.1016/j.neucom.2014.02.006", author = "Ming Gao and Xia Hong and Sheng Chen and Chris J. Harris and Emad Khalaf", keywords = "Imbalanced classification, Probability density function based over-sampling, Radial basis function classifier, Orthogonal forward selection, Particle swarm optimisation" }

- Notes:

- Not prepared for low-rank data.

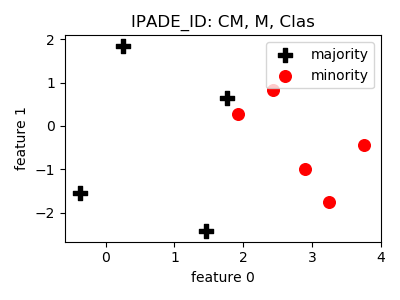

IPADE_ID¶

API¶

-

class

smote_variants.IPADE_ID(F=0.1, G=0.1, OT=20, max_it=40, dt_classifier=DecisionTreeClassifier(random_state=2), base_classifier=DecisionTreeClassifier(random_state=2), n_jobs=1, random_state=None)[source]¶ -

__init__(F=0.1, G=0.1, OT=20, max_it=40, dt_classifier=DecisionTreeClassifier(random_state=2), base_classifier=DecisionTreeClassifier(random_state=2), n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - F (float) – control parameter of differential evolution

- G (float) – control parameter of the evolution

- OT (int) – number of optimizations

- max_it (int) – maximum number of iterations for DE_optimization

- dt_classifier (obj) – decision tree classifier object

- base_classifier (obj) – classifier object

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.IPADE_ID()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@article{ipade_id, title = "Addressing imbalanced classification with instance generation techniques: IPADE-ID", journal = "Neurocomputing", volume = "126", pages = "15 - 28", year = "2014", note = "Recent trends in Intelligent Data Analysis Online Data Processing", issn = "0925-2312", doi = "https://doi.org/10.1016/j.neucom.2013.01.050", author = "Victoria López and Isaac Triguero and Cristóbal J. Carmona and Salvador García and Francisco Herrera", keywords = "Differential evolution, Instance generation, Nearest neighbor, Decision tree, Imbalanced datasets" }

- Notes:

- According to the algorithm, if the addition of a majority sample doesn’t improve the AUC during the DE optimization process, the addition of no further majority points is tried.

- In the differential evolution the multiplication by a random number seems to have a deteriorating effect; a new scaling parameter was added to fix this.

- It is not specified how to do the evaluation.

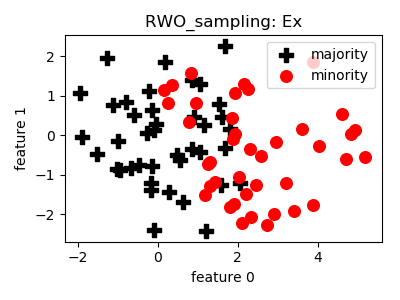

RWO_sampling¶

API¶

-

class

smote_variants.RWO_sampling(proportion=1.0, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.RWO_sampling()

>>> X_samp, y_samp= oversampler.sample(X, y)
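
The idea behind random walk oversampling can be sketched in a few lines. The version below assumes the per-feature step of the referenced paper, where a minority sample is shifted by a standard normal draw scaled by the feature's standard deviation over the square root of the minority count; the `rwo_sample` helper and the use of the population standard deviation are assumptions, not the library's code.

```python
import math
import random
import statistics


def rwo_sample(X_min, rng):
    """One synthetic point obtained by a random walk step from a minority sample.

    Each feature j is moved by (std_j / sqrt(n)) * r with r ~ N(0, 1), where
    std_j is computed over the minority samples. Hypothetical helper.
    """
    n = len(X_min)
    stds = [statistics.pstdev(column) for column in zip(*X_min)]
    base = rng.choice(X_min)
    return [x - (s / math.sqrt(n)) * rng.gauss(0.0, 1.0)
            for x, s in zip(base, stds)]


rng = random.Random(0)
X_min = [[1.0, 5.0], [2.0, 5.0], [3.0, 5.0]]
print(rwo_sample(X_min, rng))  # second feature is constant, so it stays 5.0
```

Because the step size shrinks with the square root of the number of minority samples, the generated points stay close to the per-feature mean and variance of the minority class.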

- References:

BibTex:

@article{rwo_sampling, author = {Zhang, Huaxiang and Li, Mingfang}, year = {2014}, month = {11}, pages = {}, title = {RWO-Sampling: A Random Walk Over-Sampling Approach to Imbalanced Data Classification}, volume = {20}, booktitle = {Information Fusion} }

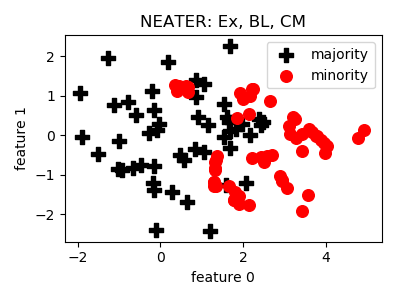

NEATER¶

API¶

-

class

smote_variants.NEATER(proportion=1.0, smote_n_neighbors=5, b=5, alpha=0.1, h=20, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, smote_n_neighbors=5, b=5, alpha=0.1, h=20, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- smote_n_neighbors (int) – number of neighbors in SMOTE sampling

- b (int) – number of neighbors

- alpha (float) – smoothing term

- h (int) – number of iterations in evolution

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.NEATER()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@INPROCEEDINGS{neater, author={Almogahed, B. A. and Kakadiaris, I. A.}, booktitle={2014 22nd International Conference on Pattern Recognition}, title={NEATER: Filtering of Over-sampled Data Using Non-cooperative Game Theory}, year={2014}, volume={}, number={}, pages={1371-1376}, keywords={data handling;game theory;information filtering;NEATER;imbalanced data problem;synthetic data;filtering of over-sampled data using non-cooperative game theory;Games;Game theory;Vectors; Sociology;Statistics;Silicon; Mathematical model}, doi={10.1109/ICPR.2014.245}, ISSN={1051-4651}, month={Aug}}

- Notes:

- Evolving both majority and minority probabilities, as nothing ensures that the probabilities remain in the range [0,1], and they need to be normalized.

- The inversely weighted function needs to be cut at some value (like the alpha level), otherwise it will overemphasize the utility of having differing neighbors next to each other.

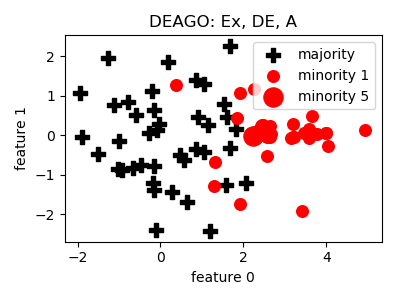

DEAGO¶

API¶

-

class

smote_variants.DEAGO(proportion=1.0, n_neighbors=5, e=100, h=0.3, sigma=0.1, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, e=100, h=0.3, sigma=0.1, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – number of neighbors

- e (int) – number of epochs

- h (float) – fraction of number of hidden units

- sigma (float) – training noise

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.DEAGO()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@INPROCEEDINGS{deago, author={Bellinger, C. and Japkowicz, N. and Drummond, C.}, booktitle={2015 IEEE 14th International Conference on Machine Learning and Applications (ICMLA)}, title={Synthetic Oversampling for Advanced Radioactive Threat Detection}, year={2015}, volume={}, number={}, pages={948-953}, keywords={radioactive waste;advanced radioactive threat detection;gamma-ray spectral classification;industrial nuclear facilities;Health Canadas national monitoring networks;Vancouver 2010; Isotopes;Training;Monitoring; Gamma-rays;Machine learning algorithms; Security;Neural networks;machine learning;classification;class imbalance;synthetic oversampling; artificial neural networks; autoencoders;gamma-ray spectra}, doi={10.1109/ICMLA.2015.58}, ISSN={}, month={Dec}}

- Notes:

- There is no hint on the activation functions and amounts of noise.

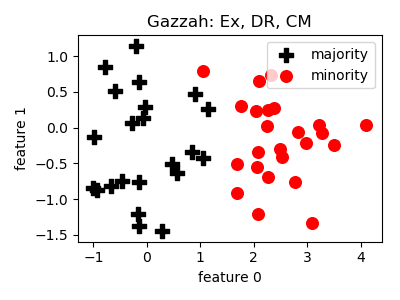

Gazzah¶

API¶

-

class

smote_variants.Gazzah(proportion=1.0, n_components=2, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_components=2, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_components (int) – number of components in PCA analysis

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.Gazzah()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@INPROCEEDINGS{gazzah, author={Gazzah, S. and Hechkel, A. and Essoukri Ben Amara, N. }, booktitle={2015 IEEE 12th International Multi-Conference on Systems, Signals Devices (SSD15)}, title={A hybrid sampling method for imbalanced data}, year={2015}, volume={}, number={}, pages={1-6}, keywords={computer vision;image classification; learning (artificial intelligence); sampling methods;hybrid sampling method;imbalanced data; diversification;computer vision domain;classical machine learning systems;intraclass variations; system performances;classification accuracy;imbalanced training data; training data set;over-sampling; minority class;SMOTE star topology; feature vector deletion;intra-class variations;distribution criterion; biometric data;true positive rate; Training data;Principal component analysis;Databases;Support vector machines;Training;Feature extraction; Correlation;Imbalanced data sets; Intra-class variations;Data analysis; Principal component analysis; One-against-all SVM}, doi={10.1109/SSD.2015.7348093}, ISSN={}, month={March}}

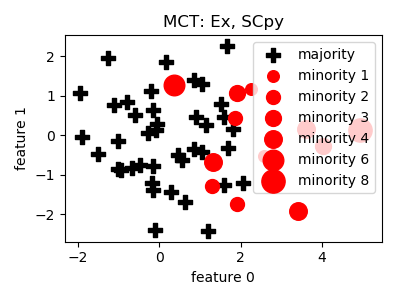

MCT¶

API¶

-

class

smote_variants.MCT(proportion=1.0, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.MCT()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@article{mct, author = {Jiang, Liangxiao and Qiu, Chen and Li, Chaoqun}, year = {2015}, month = {03}, pages = {1551004}, title = {A Novel Minority Cloning Technique for Cost-Sensitive Learning}, volume = {29}, journal = {International Journal of Pattern Recognition and Artificial Intelligence} }

- Notes:

- Mode is changed to median and distance is changed to Euclidean to support continuous features, and normalization is applied.
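The substitutions mentioned in the note can be illustrated as follows. This is a sketch under assumptions, not the library's implementation: the function name is hypothetical, and "normalized" is read here as scaling the distances into [0, 1], which is only one possible interpretation.

```python
import numpy as np

def normalized_distances_to_median(X_min):
    """Euclidean distance of each minority sample to the feature-wise
    median, scaled into [0, 1]. Illustrative only."""
    center = np.median(X_min, axis=0)              # median replaces the mode
    dist = np.linalg.norm(X_min - center, axis=1)  # Euclidean distance
    max_dist = dist.max()
    if max_dist == 0.0:                            # all samples coincide
        return np.zeros_like(dist)
    return dist / max_dist                         # assumed normalization

X_min = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [4.0, 3.0]])
d = normalized_distances_to_median(X_min)
print(d)
```

The sample farthest from the median receives the value 1.0, and samples at the median receive 0.0.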

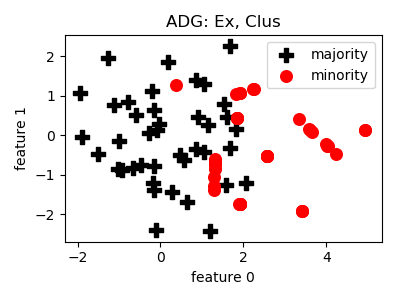

ADG¶

API¶

-

class

smote_variants.ADG(proportion=1.0, kernel='inner', lam=1.0, mu=1.0, k=12, gamma=1.0, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, kernel='inner', lam=1.0, mu=1.0, k=12, gamma=1.0, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- kernel (str) – 'inner'/'rbf_x', where x is a float, the bandwidth

- lam (float) – lambda parameter of the method

- mu (float) – mu parameter of the method

- k (int) – number of samples to generate in each iteration

- gamma (float) – gamma parameter of the method

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.ADG()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@article{adg, author = {Pourhabib, A. and Mallick, Bani K. and Ding, Yu}, year = {2015}, pages = {2695--2724}, title = {Absent Data Generating Classifier for Imbalanced Class Sizes}, volume = {16}, journal = {Journal of Machine Learning Research} }

- Notes:

- This method has many parameters, so it becomes fairly hard to cross-validate thoroughly.

- Fails if the matrix is singular when computing alpha_star; fixed by PCA.

- Singularity might be caused by repeated samples.

- Maintaining the kernel matrix becomes infeasible above a couple of thousand vectors.
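Two of the notes can be checked numerically: a repeated sample makes a kernel matrix singular, and a dense kernel matrix grows quadratically with the number of vectors. The sketch below is illustrative only. The function names are hypothetical, and the Gaussian form `exp(-d^2 / (2 * bandwidth^2))` is an assumption, since the exact form used by the `'rbf_x'` kernel is not stated here.

```python
import numpy as np

def rbf_kernel_matrix(X, bandwidth=1.0):
    """Dense Gaussian kernel matrix exp(-||x_i - x_j||^2 / (2 * bandwidth^2))."""
    sq_norms = (X ** 2).sum(axis=1)
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * X @ X.T
    return np.exp(-np.maximum(sq_dists, 0.0) / (2.0 * bandwidth ** 2))

def kernel_matrix_megabytes(n_samples, bytes_per_value=8):
    """Memory needed for an n x n float64 kernel matrix, in MB."""
    return n_samples ** 2 * bytes_per_value / 1e6

X = np.array([[0.0, 0.0], [2.0, 0.0], [0.0, 2.0], [2.0, 2.0], [4.0, 4.0]])
X_dup = np.vstack([X, X[:1]])  # repeat the first sample

K = rbf_kernel_matrix(X)
K_dup = rbf_kernel_matrix(X_dup)
print(K.shape, np.linalg.matrix_rank(K))          # 5 x 5, rank 5
print(K_dup.shape, np.linalg.matrix_rank(K_dup))  # 6 x 6, rank 5: singular
print(kernel_matrix_megabytes(5000))              # 200.0
```

Removing duplicate rows before building the kernel matrix is one way to avoid this particular source of singularity.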

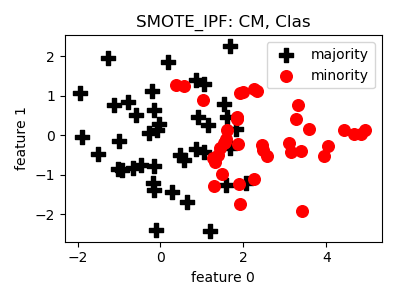

SMOTE_IPF¶

API¶

-

class

smote_variants.SMOTE_IPF(proportion=1.0, n_neighbors=5, n_folds=9, k=3, p=0.01, voting='majority', classifier=DecisionTreeClassifier(random_state=2), n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_neighbors=5, n_folds=9, k=3, p=0.01, voting='majority', classifier=DecisionTreeClassifier(random_state=2), n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_neighbors (int) – number of neighbors in SMOTE sampling

- n_folds (int) – the number of partitions

- k (int) – used in stopping condition

- p (float) – percentage value ([0,1]) used in stopping condition

- voting (str) – 'majority'/'consensus'

- classifier (obj) – classifier object

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.SMOTE_IPF()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@article{smote_ipf, title = "SMOTE–IPF: Addressing the noisy and borderline examples problem in imbalanced classification by a re-sampling method with filtering", journal = "Information Sciences", volume = "291", pages = "184 - 203", year = "2015", issn = "0020-0255", doi = "https://doi.org/10.1016/j.ins.2014.08.051", author = "José A. Sáez and Julián Luengo and Jerzy Stefanowski and Francisco Herrera", keywords = "Imbalanced classification, Borderline examples, Noisy data, Noise filters, SMOTE" }
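The `voting`, `k` and `p` parameters can be illustrated with a small sketch of the filtering logic. It assumes the usual description of the iterative-partitioning filter: a sample is flagged when more than half (`'majority'`) or all (`'consensus'`) of the per-partition classifiers misclassify it, and filtering stops once `k` consecutive iterations each remove fewer than `p` times the original number of samples. The function names are hypothetical and this is not the library's code.

```python
import numpy as np

def flag_noisy(predictions, y, voting="majority"):
    """predictions: array (n_classifiers, n_samples) of predicted labels.
    Returns a boolean mask of samples flagged as noisy."""
    wrong = predictions != y          # broadcast over classifiers
    n_wrong = wrong.sum(axis=0)
    n_classifiers = predictions.shape[0]
    if voting == "majority":
        return n_wrong > n_classifiers / 2
    if voting == "consensus":
        return n_wrong == n_classifiers
    raise ValueError(f"unknown voting scheme: {voting}")

def should_stop(removed_per_iteration, n_original, k=3, p=0.01):
    """Stop once the last k iterations each removed fewer than p * n_original."""
    if len(removed_per_iteration) < k:
        return False
    return all(r < p * n_original for r in removed_per_iteration[-k:])

y = np.array([0, 0, 1, 1])
predictions = np.array([
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 1, 0],
])
print(flag_noisy(predictions, y, "majority").tolist())   # [False, True, False, True]
print(flag_noisy(predictions, y, "consensus").tolist())  # [False, False, False, True]
print(should_stop([12, 0, 1, 0], n_original=200))        # True
```

Consensus voting removes fewer samples than majority voting, so it is the more conservative choice.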

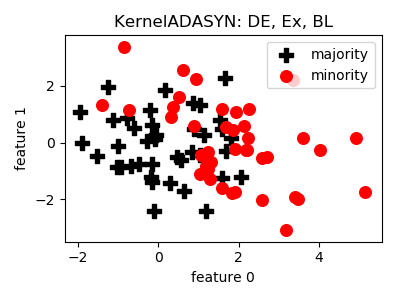

KernelADASYN¶

API¶

-

class

smote_variants.KernelADASYN(proportion=1.0, k=5, h=1.0, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, k=5, h=1.0, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- k (int) – number of neighbors in the nearest neighbors component

- h (float) – kernel bandwidth

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.KernelADASYN()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@INPROCEEDINGS{kernel_adasyn, author={Tang, B. and He, H.}, booktitle={2015 IEEE Congress on Evolutionary Computation (CEC)}, title={KernelADASYN: Kernel based adaptive synthetic data generation for imbalanced learning}, year={2015}, volume={}, number={}, pages={664-671}, keywords={learning (artificial intelligence); pattern classification; sampling methods;KernelADASYN; kernel based adaptive synthetic data generation;imbalanced learning;standard classification algorithms;data distribution; minority class decision rule; expensive minority class data misclassification;kernel based adaptive synthetic over-sampling approach;imbalanced data classification problems;kernel density estimation methods;Kernel; Estimation;Accuracy;Measurement; Standards;Training data;Sampling methods;Imbalanced learning; adaptive over-sampling;kernel density estimation;pattern recognition;medical and healthcare data learning}, doi={10.1109/CEC.2015.7256954}, ISSN={1089-778X}, month={May}}

- Notes:

- The sampling method was not specified; Markov chain Monte Carlo has been implemented.

- Not prepared for an ill-conditioned covariance matrix.
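To give an idea of what Markov chain Monte Carlo sampling from a kernel density estimate can look like, here is a minimal random-walk Metropolis sketch. It is not the library's implementation: the function names, the `step` and `burn_in` parameters, and the unweighted isotropic Gaussian kernel are all assumptions. Using an isotropic kernel sidesteps the covariance conditioning issue rather than addressing it.

```python
import numpy as np

def kde_log_density(x, centers, h=1.0):
    """Log of an unnormalized isotropic Gaussian kernel density estimate."""
    sq_dists = ((centers - x) ** 2).sum(axis=1)
    exponents = -sq_dists / (2.0 * h ** 2)
    m = exponents.max()
    return m + np.log(np.exp(exponents - m).sum())  # log-sum-exp for stability

def metropolis_sample(centers, n_samples, h=1.0, step=0.5, burn_in=100, seed=0):
    """Random-walk Metropolis sampler targeting the density above."""
    rng = np.random.default_rng(seed)
    x = centers[rng.integers(len(centers))].astype(float)
    log_p = kde_log_density(x, centers, h)
    samples = []
    for i in range(burn_in + n_samples):
        proposal = x + rng.normal(scale=step, size=x.shape)
        log_p_new = kde_log_density(proposal, centers, h)
        # accept with probability min(1, p_new / p_old)
        if np.log(rng.random()) < log_p_new - log_p:
            x, log_p = proposal, log_p_new
        if i >= burn_in:
            samples.append(x.copy())
    return np.array(samples)

X_min = np.array([[0.0, 0.0], [1.0, 0.5], [0.5, 1.0]])
X_new = metropolis_sample(X_min, n_samples=20, h=0.5)
print(X_new.shape)  # (20, 2)
```

Consecutive samples from such a chain are correlated, so in practice one might keep only every few steps.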

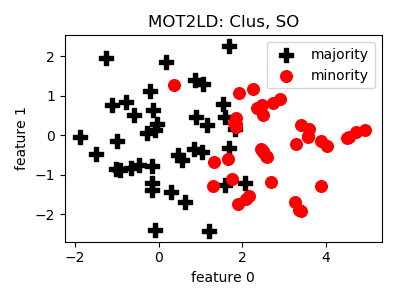

MOT2LD¶

API¶

-

class

smote_variants.MOT2LD(proportion=1.0, n_components=2, k=5, d_cut='auto', n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_components=2, k=5, d_cut='auto', n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_components (int) – number of components for stochastic neighborhood embedding

- k (int) – number of neighbors in the nearest neighbor component

- d_cut (float/str) – distance cut value/'auto' for automated selection

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.MOT2LD()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@InProceedings{mot2ld, author="Xie, Zhipeng and Jiang, Liyang and Ye, Tengju and Li, Xiaoli", editor="Renz, Matthias and Shahabi, Cyrus and Zhou, Xiaofang and Cheema, Muhammad Aamir", title="A Synthetic Minority Oversampling Method Based on Local Densities in Low-Dimensional Space for Imbalanced Learning", booktitle="Database Systems for Advanced Applications", year="2015", publisher="Springer International Publishing", address="Cham", pages="3--18", isbn="978-3-319-18123-3" }

- Notes:

- Clusters might contain a single element, and all points can be filtered as noise.

- Clusters might contain 0 elements as well, if all points are filtered as noise.

- The entire clustering can become empty.

- TSNE is very slow when the number of instances exceeds a couple of thousand.
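The edge cases in the notes can be made concrete with a small sketch of a cutoff-based local density. It assumes that `d_cut` acts as a distance cutoff when counting neighbors; the noise rule (`rho > 0`) and the fallback for the case where every point is filtered are hypothetical and only show the kind of guard these edge cases call for.

```python
import numpy as np

def local_density(X, d_cut):
    """Number of other points closer than d_cut to each point."""
    diffs = X[:, None, :] - X[None, :, :]
    dists = np.sqrt((diffs ** 2).sum(axis=2))
    return (dists < d_cut).sum(axis=1) - 1  # subtract the point itself

X_min = np.array([[0.0, 0.0], [0.1, 0.0], [0.0, 0.1], [5.0, 5.0]])
rho = local_density(X_min, d_cut=0.5)
print(rho.tolist())  # [2, 2, 2, 0]

keep = rho > 0  # hypothetical noise rule: isolated points are dropped
if not keep.any():
    # every point was treated as noise: fall back to the unfiltered data
    X_kept = X_min
else:
    X_kept = X_min[keep]
print(len(X_kept))  # 3
```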

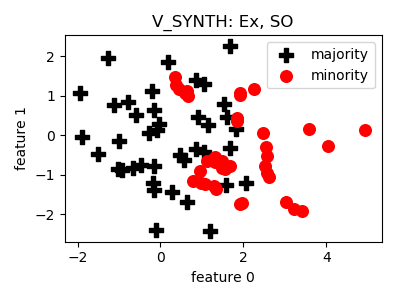

V_SYNTH¶

API¶

-

class

smote_variants.V_SYNTH(proportion=1.0, n_components=3, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_components=3, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_components (int) – number of components for PCA

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.V_SYNTH()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@article{v_synth, author = {Young,Ii, William A. and Nykl, Scott L. and Weckman, Gary R. and Chelberg, David M.}, title = {Using Voronoi Diagrams to Improve Classification Performances when Modeling Imbalanced Datasets}, journal = {Neural Comput. Appl.}, issue_date = {July 2015}, volume = {26}, number = {5}, month = jul, year = {2015}, issn = {0941-0643}, pages = {1041--1054}, numpages = {14}, url = {http://dx.doi.org/10.1007/s00521-014-1780-0}, doi = {10.1007/s00521-014-1780-0}, acmid = {2790665}, publisher = {Springer-Verlag}, address = {London, UK, UK}, keywords = {Data engineering, Data mining, Imbalanced datasets, Knowledge extraction, Numerical algorithms, Synthetic over-sampling}, }

- Notes:

- The proposed encompassing bounding box generation is incorrect.

- Voronoi diagram generation in high-dimensional spaces is unstable.
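Because Voronoi diagrams are hard to compute reliably in high dimensions, the data is reduced with PCA first, which is what `n_components` controls. The following is a minimal NumPy sketch of such a projection and of mapping points back to the original space. The function name is hypothetical and the library's actual preprocessing may differ.

```python
import numpy as np

def pca_project(X, n_components=3):
    """Project X onto its first n_components principal directions."""
    mean = X.mean(axis=0)
    X_centered = X - mean
    # rows of vt are principal directions, ordered by singular value
    _, _, vt = np.linalg.svd(X_centered, full_matrices=False)
    components = vt[:n_components]
    return X_centered @ components.T, components, mean

rng = np.random.default_rng(0)
X = rng.normal(size=(50, 10))
X_low, components, mean = pca_project(X, n_components=3)
print(X_low.shape)  # (50, 3)

# map low-dimensional points (e.g. synthetic ones) back to the original space
X_back = X_low @ components + mean
print(X_back.shape)  # (50, 10)
```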

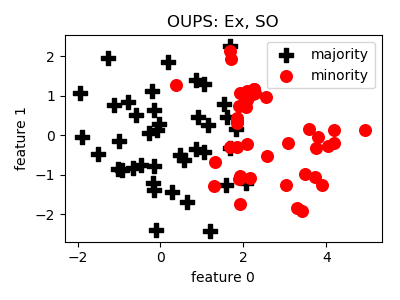

OUPS¶

API¶

-

class

smote_variants.OUPS(proportion=1.0, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object

Parameters: - proportion (float) – proportion of the difference of n_maj and n_min to sample e.g. 1.0 means that after sampling the number of minority samples will be equal to the number of majority samples

- n_jobs (int) – number of parallel jobs

- random_state (int/RandomState/None) – initializer of random_state, like in sklearn

-

get_params(deep=False)[source]¶ Returns: the parameters of the current sampling object Return type: dict

-

Example¶

>>> oversampler= smote_variants.OUPS()

>>> X_samp, y_samp= oversampler.sample(X, y)

- References:

BibTex:

@article{oups, title = "A priori synthetic over-sampling methods for increasing classification sensitivity in imbalanced data sets", journal = "Expert Systems with Applications", volume = "66", pages = "124 - 135", year = "2016", issn = "0957-4174", doi = "https://doi.org/10.1016/j.eswa.2016.09.010", author = "William A. Rivera and Petros Xanthopoulos", keywords = "SMOTE, OUPS, Class imbalance, Classification" }

- Notes:

- In the description of the algorithm, a fractional number p(j) is used to index a vector.
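A fractional value cannot index a vector directly, so an implementation has to pick some conversion. The sketch below shows one possible choice, rounding down and clipping to the valid range. How the library resolves this is not stated here, so both the function name and the rounding rule are assumptions.

```python
import numpy as np

def fractional_index(values, p):
    """Look up `values` at a fractional position p by rounding down
    and clipping to the valid index range (assumed rule)."""
    idx = int(np.clip(np.floor(p), 0, len(values) - 1))
    return values[idx]

values = np.array([10, 20, 30, 40])
print(fractional_index(values, 2.7))  # 30
print(fractional_index(values, 9.3))  # 40 (clipped to the last element)
```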

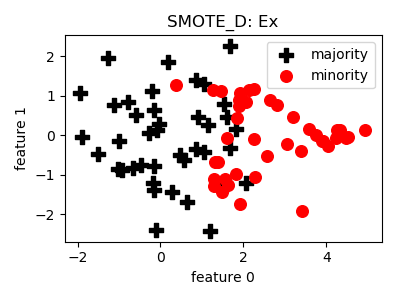

SMOTE_D¶

API¶

-

class

smote_variants.SMOTE_D(proportion=1.0, k=3, n_jobs=1, random_state=None)[source]¶ -

__init__(proportion=1.0, k=3, n_jobs=1, random_state=None)[source]¶ Constructor of the sampling object